Media Literacy: Action Needed

I spoke briefly in a recent debate on Media Literacy, the report of the House of Lords Select Committee on Communications and Digital: https://publications.parliament.uk/pa/ld5901/ldselect/ldcomm/163/163.pdf

The same day, the Government published its Media Literacy Action Plan: https://www.gov.uk/government/publications/a-safe-informed-digital-nation/a-safe-informed-digital-nation

I then took part in a debate on the Curriculum and Assessment Review by Professor Becky Francis CBE: Building a world-class curriculum https://assets.publishing.service.gov.uk/media/690b96bbc22e4ed8b051854d/Curriculum_and_Assessment_Review_final_report_-_Building_a_world-class_curriculum_for_all.pdf and the Government response to it: https://assets.publishing.service.gov.uk/media/690b2a4a14b040dfe82922ea/Government_response_to_the_Curriculum_and_Assessment_Review.pdf

It is far from clear that we are acting fast or thoroughly enough to enable what is called AI fluency in our children.

We are faced with a landscape of algorithmic manipulation, proliferating deepfakes, a torrent of disinformation and, of course, online fraud. The committee is right: a failure to prioritise media literacy is a threat not just to individuals but to social cohesion and democracy itself. In the era of generative AI, media literacy is, as the committee makes clear, a requirement for modern citizenship. Our current approach is indeed fragmented and underresourced and lacks strategic vision. Ofcom’s own evidence, highlighted by the committee, shows little improvement in core skills over six years. In that context, the Government’s claim in their response that they and Ofcom have met the mounting scale of the challenge is simply not credible.

I welcome the completed curriculum and assessment review, which commits the Government to publishing revised national curriculum content by spring 2027. However, as the committee recommends, media literacy should be embedded across the curriculum and teachers should receive sustained support. This should arrive earlier.

As the committee urges, we need media literacy to be prioritised across government, not bolted on at the margins. I very much hope that the Minister will be able to assure us that one of the key tests of the effectiveness of the new media literacy action plan will be whether that takes place.

The Government cannot simply continue to outsource their responsibility in this area to the regulator. Although I welcome Ofcom’s new three-year media literacy strategy and its tougher use of behavioural audits under the Online Safety Act, which the Government rightly highlight, it is deeply disappointing that, more than 20 years on, Ofcom still has not brought its definition of media literacy up to date by explicitly recognising critical thinking—although I detect slightly different language in the media literacy action plan. Ofcom should, as the committee says, set minimum standards for platforms’ media literacy activity and be empowered to hold them to account.

You cannot build media literacy on foundations that do not exist. As the committee and many stakeholders argue, we must treat connectivity as an essential utility and invest accordingly. The vision from the Liberal Democrats is empowered citizenship: not a nanny state that tells people what to think but a literate state that gives people the tools to think for themselves. That is, in essence, the spirit of the committee’s report.

I urge the Minister to treat this report not as suggestions but as an urgent road map. We need, as the committee sets out, a unified strategy, a robust and critical definition of media literacy and the digital infrastructure to underpin it all.

Finally, I say in closing that I believe the BBC is not the problem; it is part of the answer. I look forward to the Minister’s response.

My Lords, I thank the noble Lord, Lord Freyberg, for securing this debate and so brilliantly illustrating the “arts dividend” in education—the phrase used by Darren Henley, CEO of the Arts Council England. The Francis review contains important proposals, but the response to it falls short on the issue that will hugely determine our economic and democratic future: AI literacy. Media and digital literacy is, in Internet Matters’ own words, “a postcode lottery”.

I have three specific concerns. The first is institutional agility. I welcome the media literacy action plan published just 10 days ago, in particular the £24 million TechFirst youth programme and the continued investment in the National Centre for Computing Education. But the plan confirms what we feared: curriculum consultation will not begin until later this year. The revised programmes of study will not be published until spring 2027 and they will not be taught until September 2028. The Government’s own foreword acknowledges that one in seven adults avoids the internet altogether due to safety concerns. They cannot simultaneously diagnose that level of digital anxiety and offer a curriculum solution that is nearly three years away. We need to establish an AI in education advisory board, as suggested by Policy Connect in its report, Skills in the Age of AI, to provide real-time expert guidance, ensuring that the curriculum becomes a living document and is not a decade behind the technology.

My second concern is curriculum philosophy. AI literacy must be a mandatory cross-curriculum competence from age seven to 18, prioritising ethical use, critical thinking and the human-centred skills that AI cannot replace. All this is, of course, to be found in the arts and humanities. There is a democratic dimension that the Government cannot ignore. They intend to extend the franchise to 16 and 17 year-olds. Research by Internet Matters, confirmed by the Electoral Commission, shows that digital literacy is directly linked to young people’s capacity to engage meaningfully in democracy.

If the Government extend the franchise, they need to equip young people with the literacy to navigate the information environment.

My third concern is the teaching workforce. Teachers are the primary multiplier for these skills, yet 30% cite a lack of relevant training as a barrier and 21% cite a lack of up-to-date resources. AI literacy must be embedded in initial teacher training, the early career framework and national professional qualifications. The action plan’s commitments on teacher support are welcome but conspicuously vague.

I ask the Minister three questions. What provision will be made for children in school now, before 2028? Will the Government establish an AI in education advisory board? When will a funded plan to integrate AI competences into statutory teacher training be published? We cannot build an AI-ready economy on a digitally illiterate workforce. Education must come first, not last.

Lord C-J and the Lib Dems: Risk-Based Age Ratings, Not Blanket Bans: A Smarter Way to Protect Children Online

The House of Lords recently held an important debate on the access of under-16s to social media. Lord Nash proposed a total ban. We preferred something more risk-based. We all want government action more quickly than the promised three-month consultation.

This is what I said in the debate supporting the Liberal Democrat amendment to Lord Nash's proposal:

My Lords, it is a pleasure to follow the noble Lord, Lord Knight. Like him, I have been involved in debating online safety issues, from the internet safety Green Paper through to the Joint Committee on the Draft Online Safety Bill, and then seeing the Online Safety Bill become an Act. I declare an interest as a consultant to DLA Piper on AI policy and regulation.

I will speak in support of my noble friend Lord Mohammed of Tinsley’s Amendments 94B and 94C, which are amendments to Amendment 94A tabled by the noble Lord, Lord Nash. In doing so, I urge the House to strengthen his proposal by transforming it from a blanket ban into an effective, harms-based approach to social media regulation that can actually be implemented and enforced within the same timetable.

As we have heard, the Government have announced a three-month consultation on children’s social media use. That is a welcome demonstration that the Government recognise the importance of this issue and are willing to consider further action beyond the Online Safety Act. However, our amendments make it clear that we should not wait until summer, or even beyond, to act, as we have a workable, legally operable solution before us today. Far from weakening the proposal from the noble Lord, Lord Nash, our amendments are designed to make raising the age to 16 deliverable in practice, not just attractive in a headline.

I share the noble Lord’s diagnosis: we are facing a children’s mental health catastrophe, with young people exposed to misogyny, violence and addictive algorithms. I welcome the noble Lord’s bringing this critical issue before the House and strongly support his proposal for a default minimum age of 16. After 20 years of profiteering from our children’s attention, we need a reset. The voices of young people themselves are impossible to ignore. At the same time, tens of thousands of parents have reached out to us all, just in the past week, calling to raise the age—we cannot let them down.

The Government have announced that Ministers will visit Australia to learn from its approach. I urge them to learn the right lessons. Australia has taken the stance of banning social media for under-16s, with a current list of 10 platforms. However, their approach demonstrates three critical flaws that Amendment 94A, as drafted, would replicate and that we must avoid.

First, there is the definition problem. The Australian legislation has had to draw explicit lines that keep services such as WhatsApp, Google Classroom and many gaming platforms out of scope, to make the ban effective. The noble Lord, Lord Nash, has rightly recognised these difficulties by giving the Secretary of State the power to exclude platforms, but that simply moves the arbitrariness from a list in legislation to ministerial discretion. What criteria would the Secretary of State use? Our approach instead puts those decisions on a transparent, risk-based footing with Ofcom and the Children’s Commissioner, rather than in one pair of hands.

Secondly, there is the cliff-edge problem. The unamended approach of Amendment 94A risks protecting children in a sterile digital environment until their 16th birthday, and then suddenly flooding them with harmful content without having developed the digital literacy to cope.

As the joint statement from 42 children’s charities warns, children aged 16 would face a dangerous cliff edge when they start to use high-risk platforms. Our amendment addresses that.

Thirdly, this proposal risks taking a Dangerous Dogs Bill approach to regulation. Just as breed-specific legislation failed because it focused on the type of dog rather than dangerous behaviour, the Australian ban focuses on categories rather than risk. Because it is tied to the specific purpose of social interaction, the Australian ban currently excludes high-risk environments such as Roblox, Discord and many AI chatbots, even though children spend a large amount of time on those platforms. An arbitrary list based on what platforms do will not deal with the core issue of harm. The Molly Rose Foundation has rightly warned that this simply risks migrating bad actors, groomers and violent groups from banned platforms to permitted ones, and we will end up playing whack-a-mole with children’s safety. Our amendment is designed precisely to address that.

Our concerns are shared by the very organisations at the forefront of child safety. This weekend, 42 charities and experts, including the Molly Rose Foundation, the NSPCC, the Internet Watch Foundation, Childline, the Breck Foundation and the Centre for Protecting Women Online, issued a joint statement warning that

“‘social media bans’ are the wrong solution”.

They warn that blanket bans risk creating a false sense of safety and call instead for risk-based minimum ages and design duties that reflect the different levels of risk on different platforms. When the family of Molly Russell, whose tragic death galvanised this entire debate, warns against blanket bans and calls for targeted regulation, we must listen. Those are the organisations that pick up the pieces every day when things go wrong online. They are clear that a simple ban may feel satisfying, but it is the wrong tool and risks a dangerous false sense of safety.

Our amendments build on the foundation provided by the noble Lord, Lord Nash, while addressing these critical flaws. They would provide ready-made answers to many of the questions the Government’s promised consultation will raise about minimum ages, age verification, addictive design features and how to ensure that platforms take responsibility for child safety. We would retain the default minimum age of 16. Crucially, that would remain the law for every platform unless and until it proves against rigorous criteria that it is safe enough to merit a lower age rating. However, and this is the crucial improvement, platforms could be granted exemptions if—and only if—they can demonstrate to Ofcom and the Children’s Commissioner that they do not present a risk of harm.

Our amendments would create film-style age ratings for platforms. Safe educational platforms could be granted exemptions with appropriate minimum ages, and the criteria are rigorous. Platforms would have to demonstrate that they meet Ofcom’s guidance on risk-based minimum ages, protect children’s rights under the UN Convention on the Rights of the Child, have considered their impact on children’s mental health, have investigated whether their design encourages addictive use and have reviewed their algorithms for content recommendation and targeted advertising. So this is not a get-out clause for tech companies; it is tied directly to whether the actual design and algorithms on their platforms are safe for children. Crucially, exemptions are subject to periodic review and, if standards slip, the exemption can be revoked.

First, this prevents harms from migrating. If Discord or a gaming lobby presents a high risk, it would not qualify for exemption. If a platform proves it is safe, it becomes accessible. We would regulate risk to the child, not the type of technology.

Secondly, it incentivises safety by design. The Australian model tells platforms to build a wall to block children. This concern is shared by the Online Safety Act Network, representing 23 organisations whose focuses span child protection, suicide prevention and violence against women and girls. It warns that current implementation focuses on

“ex-post measures to reduce the … harm that has already occurred rather than upstream, content-neutral, ‘by-design’ interventions to seek to prevent it occurring in the first place”.

It explicitly calls for requiring platforms to address

“harms to children caused by addictive or compulsive design”—

precisely what our amendment mandates.

Thirdly, it is future-proof. We must prepare for a future that has already arrived—AI, chatbots and tomorrow’s technologies. Our risk-based approach allows Ofcom and the Children’s Commissioner to regulate emerging harms effectively, rather than playing catch-up with exemptions.

We should not adopt a blunt instrument that bans Wikipedia or education and helpline services by accident, drives children into high-risk gaming sites by omission or creates a dangerous cliff edge at 16 by design. We should not fall into the trap of regulating categories rather than harms, and we should not put the power to choose in one person’s hands, namely the Secretary of State.

Instead, let us build on the foundation provided by the noble Lord, Lord Nash, by empowering Ofcom and the Children’s Commissioner to implement a sophisticated world-leading system, one that protects children based on actual risk while allowing them to learn, communicate and develop digital resilience. I urge the House to support our amendments to Amendment 94A.

Media literacy has never been more urgent.

This is a speech I recently gave at the launch of the Digital Poverty Alliance's new report on media literacy in education.

With continuing Government efforts to move public services online alongside expanding AI usage, media literacy has never been more urgent. Debates surrounding media literacy typically focus on visible risks rather than the deeper structural issues that determine who can understand, interpret and contribute in the digital age.

I have the honour of serving as an Officer of the Digital Inclusion All-Party Parliamentary Group (APPG), and previously as Treasurer of the predecessor Data Poverty APPG. This issue, ensuring digital opportunities are universal, is crucial for many of us in Parliament.

The Urgent Case for Digital Inclusion

As many of us in this room know, digital inclusion is not an end in itself; it is a vital route to better education, to employment, to improved healthcare, and a key means of social connection. Beyond the social benefits, there are also huge economic benefits of achieving a fully digitally capable society. Research suggests that increased digital inclusion could result in a £13.7 billion uplift to UK GDP.

Yet, while the UK aspires to global digital leadership, digital exclusion remains a serious societal problem. The figures are sobering:

- 1.7 million households have no mobile or broadband internet at home.

- Up to a million people have cut back or cancelled internet packages in the past year as cost of living challenges bite.

- Around 2.4 million people are unable to complete a single basic digital task required to get online.

- Over 5 million employed adults cannot complete essential digital work tasks.

- Basic digital skills are set to become the UK’s largest skills gap by 2030.

- And four in ten households with children do not meet the Minimum Digital Living Standard (MDLS).

The consequence of this is that millions of people are prevented from living a full, active, and productive life, which is bad for them and bad for the country. This is why the core mission of the DPA—to tackle device, data, and skills poverty—is so essential.

Media Literacy: Addressing the Structural Roots of Exclusion

Today, the DPA is launching its Media Literacy Report, and its timing could not be more important. With continuing Government efforts to move public services online, coupled with the rapid expansion of AI usage, media literacy has never been more urgent.

The DPA report wisely moves beyond focusing solely on the visible risks of the internet, such as misinformation, and addresses the deeper structural issues. Media literacy is inextricably linked to digital exclusion: the ability to understand, interpret, and contribute in the digital age is determined by access to devices, socio-economic background, and school policy.

- School phone bans must be accompanied by extensive media literacy education, which is iterated and revisited at multiple stages.

- Teachers must receive meaningful training on media literacy.

- Parents must be supported with accessible guidance on media literacy.

- Schools should consider peer-to-peer learning opportunities.

- Tech companies must disclose information on how recommendation algorithms function and select content.

- AI-generated information must be labelled as such.

- Verification ticks should be removed from accounts spreading misinformation, especially related to health.

We risk consigning people to a world of second-class services if we do not provide the foundational skills required to engage critically, confidently, and safely with the online world. Crucially, the DPA’s work keeps those with lived experience of digital exclusion at the heart of the analysis, providing real-life stories from parents, teachers, and young people.

Tackling Data Poverty: The Affordability Challenge

One of the most immediate and significant barriers to inclusion is affordability—what we often refer to as data poverty. Two million households in the UK are currently struggling to pay for broadband, and Age UK hears from older people who find essential services—like checking bus times or dealing with benefits—impossible due to lack of digital confidence and the pressure to manage costs.

The current system relies heavily on broadband social tariffs as the primary fix, but uptake has been sluggish, with only 5% of eligible customers having signed up previously. This is due to confusion, low awareness, cost, and complexity.

The solution requires radical, coordinated action:

- Standardisation: All operators should offer social tariffs to an agreed industry standard on speed, price, and terms. This will make it easier for customers to compare and take advantage of these vital packages.

- Simplified Access: We welcome the work being done by the DWP to develop a consent model that uses Application Programming Interfaces (APIs) to allow internet service providers (ISPs) to confirm a customer's eligibility for benefits, such as Universal Credit. This drastically simplifies the application journey for the customer.

- Sustainable Funding: My colleagues in Parliament and I have been keen to explore innovative funding methods. One strong proposal is to reduce VAT on broadband social tariffs to align with other essential goods (at least 5% or 0%). It has been calculated that reinvesting the tax receipts received from VAT on all broadband into a social fund could provide an estimated £2.1 billion per year to provide all 6.8 million UK households receiving means-tested benefits with equitable access.

Creating a Systemic, Rights-Based Approach

If we are to achieve a 'Digital Britain by 2030', we need more than fragmented, short-term solutions. We need a systematic, rights-based approach.

First, we must demand better data and universal standards. The current definition of digital inclusion, based on whether someone has accessed the internet in the past three months, is completely outdated. We should replace this outdated ONS definition with a more holistic and up-to-date approach, such as the Minimum Digital Living Standard (MDLS). This gives the entire sector a common goal.

Second, we must formally recognize internet access as an essential utility. We should think of the internet as critical infrastructure, like the water or power system. This would ensure better consumer protection.

Third, we must embed offline and physical alternatives. While encouraging digital use, we must ensure that people who cannot or do not wish to get online—such as many older people who prefer interacting with services like banking in person—have adequate, easy-to-access, non-digital options. Essential services like telephone helplines for government services, such as HMRC, and the national broadcast TV signal must be protected so the digital divide is not widened further.

Fourth, we must empower local and community infrastructure. Tackling exclusion must happen on the ground. We need to boost digital inclusion hubs and support place-based initiatives. This involves increasing the capacity and use of libraries and community centres as digital support centres and providing free Wi-Fi in public spaces.

We should stand ready to support the Government's Digital Inclusion Action Plan, but we must continue to emphasize the need for a longer-term strategy that has central oversight, such as a dedicated cross-government unit, to ensure that every policy decision is digitally inclusive from the outset.

The commitment demonstrated by the Digital Poverty Alliance today, and by everyone in this room, proves that we can and must eliminate digital poverty and ensure no one is left behind.

A Defence of the Online Safety Act: Protecting Children While Ensuring Effective Implementation

Some recent commentary on the Online Safety Act seems to treat child protection online as an abstract policy preference. The evidence reveals something far more urgent. By age 11, 27% of children have already been exposed to pornography, with the average age of first exposure at just 13. Twitter (X) alone accounts for 41% of children’s pornography exposure, followed by dedicated sites at 37%.

The consequences are profound and measurable. Research shows that 79% of 18-21 year olds have seen content involving sexual violence before turning 18, and young people aged 16-21 are now more likely to assume that girls expect or enjoy physical aggression during sex. Close to half (47%) of all respondents aged 18-21 had experienced a violent sex act, with girls the most impacted.

When we know that children’s accounts on TikTok are shown harmful content every 39 seconds, with suicide content appearing within 2.6 minutes and eating disorder content within 8 minutes, the question is not whether we should act, but how we can act most effectively.

This is not “micromanaging” people’s rights - this is responding to a public health emergency that is reshaping an entire generation’s understanding of relationships, consent, and self-worth.

Abstract arguments about civil liberties need to be set against the voices of bereaved families who fought for the Online Safety Act. The parents of Molly Russell, Frankie Thomas, Olly Stephens, Archie Battersbee, Breck Bednar, and twenty other children who died following exposure to harmful online content did not campaign for theoretical freedoms - they campaigned for their children’s right to life itself.

These families faced years of stonewalling from tech companies who refused to provide basic information about the content their children had viewed before their deaths. The Act now requires platforms to support coroner investigations and provide clear processes for bereaved families to obtain answers. This is not authoritarianism - it is basic accountability.

To repeal the Online Safety Act would indeed be a massive own-goal and a win for Elon Musk and the other tech giants who care nothing for our children’s safety. The protections of the Act were too hard won, and are simply too important, to turn our back on.

The conflation of regulating pornographic content with censoring legitimate information is neither accurate nor helpful, but we must remain vigilant against mission creep. As Victoria Collins MP and I have highlighted in our recent letter to the Secretary of State, supporting the Act’s core mission does not mean we should ignore legitimate concerns about its implementation. Parliament must retain its vital role in scrutinising how this legislation is being rolled out to ensure it achieves its intended purpose without unintended consequences.

There are significant issues emerging that Parliament must address:

Age Assurance Challenges: The concern that children may use VPNs to sidestep age verification systems is real, though it should not invalidate the protection provided to the majority who do not circumvent these measures. We need robust oversight to ensure age assurance measures are both effective and proportionate.

Overreach in Content Moderation: The age-gating of political content and categorisation of educational resources like Wikipedia represents a concerning drift from the Act’s original intent. The legislation was designed to protect children from harmful content, not to restrict access to legitimate political discourse or educational materials. Wikimedia’s legal challenge regarding its categorisation illustrates this. While Wikipedia’s concerns about volunteer safety and editorial integrity are legitimate, their challenge does not oppose the Online Safety Act as a whole, but rather seeks clarity about how its unique structure should be treated under the regulations.

Protecting Vulnerable Communities: When important forums dealing with LGBTQ+ rights, sexual health, or other sensitive support topics are inappropriately age-gated, we risk cutting off vital lifelines for young people who need them most. This contradicts the Act’s protective purpose.

Privacy and Data Protection: While the Act contains explicit privacy safeguards, ongoing vigilance is needed to ensure age assurance systems truly operate on privacy-preserving principles with robust data minimisation and security measures.

The solution to these implementation challenges is not repeal, but proper parliamentary oversight. Parliament needs the opportunity to review the Act’s implementation through post-legislative scrutiny and the chance to examine whether Ofcom is interpreting the legislation in line with its original intent and whether further legislative refinements may be necessary.

A cross-party Committee from both Houses would provide the essential scrutiny needed to ensure the Act fulfils its central aim of keeping children safe online without unintended consequences.

Fundamentally and importantly, this approach aligns with core liberal principles. John Stuart Mill’s harm principle explicitly recognises that individual liberty must be constrained when it causes harm to others.


Lord C-J: We must put the highest duties on small risky sites

Many of us were horrified that the regulations implementing the provisions of the Online Safety Act, proposed by the government on the advice of Ofcom, did not put sites such as Telegram, 4Chan and 8Chan in the top category, Category 1, for duties under the Act.

As a result I tabled a regret motion:

“that this House regrets that the Regulations do not impose duties available under the parent Act on small, high-risk platforms where harmful content, often easily accessible to children, is propagated; calls on the Government to clarify which smaller platforms will no longer be covered by Ofcom’s illegal content code and which measures they will no longer be required to comply with; and calls on the Government to withdraw the Regulations and establish a revised definition of Category 1 services.”

This is what I said in opening the debate. The motion passed against the government by 86 to 55.

Those of us who were intimately involved with its passage hoped that the Online Safety Act would bring in a new era of digital regulation, but the Government’s and Ofcom’s handling of small but high-risk platforms threatens to undermine the Act’s fundamental purpose of creating a safer online environment. That is why I am moving this amendment, and I am very grateful to all noble Lords who are present and to those taking part.

The Government’s position is rendered even more baffling by their explicit awareness of the risks. Last September, the Secretary of State personally communicated concerns to Ofcom about the proliferation of harmful content, particularly regarding children’s access. Despite this acknowledged awareness, the regulatory framework remains fundamentally flawed in its approach to platform categorisation.

The parliamentary record clearly shows that cross-party support existed for a risk-based approach to platform categorisation, which became enshrined in law. The amendment to Schedule 11 from the noble Baroness, Lady Morgan—I am very pleased to see her in her place—specifically changed the requirement for category 1 from a size “and” functionality threshold to a size “or” functionality threshold. This modification was intended to ensure that Ofcom could bring smaller, high-risk platforms under appropriate regulatory scrutiny.

Subsequently, in September 2023, on consideration of Commons amendments, the Minister responsible for the Bill, the noble Lord, Lord Parkinson—I am pleased to see him in his place—made it clear what the impact was:

“I am grateful to my noble friend Lady Morgan of Cotes for her continued engagement on the issue of small but high-risk platforms. The Government were happy to accept her proposed changes to the rules for determining the conditions that establish which services will be designated as category 1 or 2B services. In making the regulations, the Secretary of State will now have the discretion to decide whether to set a threshold based on either the number of users or the functionalities offered, or on both factors. Previously, the threshold had to be based on a combination of both”. [Official Report, 19/9/23; col. 1339.]

I do not think that could be clearer.

This Government’s and Ofcom’s decision to ignore this clear parliamentary intent is particularly troubling. The Southport tragedy serves as a stark reminder of the real-world consequences of inadequate online regulation. When hateful content fuels violence and civil unrest, the artificial distinction between large and small platforms becomes a dangerous regulatory gap. The Government and Ofcom seem to have failed to learn from these events.

At the heart of this issue seems to lie a misunderstanding of how harmful content proliferates online. The impact on vulnerable groups is particularly concerning. Suicide promotion forums, incel communities and platforms spreading racist content continue to operate with minimal oversight due to their size rather than their risk profile. This directly contradicts the Government’s stated commitment to halving violence against women and girls, and protecting children from harmful content online. The current regulatory framework creates a dangerous loophole that allows these harmful platforms to evade proper scrutiny.

The duties avoided by these smaller platforms are not trivial. They will escape requirements to publish transparency reports, enforce their terms of service and provide user empowerment tools. The absence of these requirements creates a significant gap in user protection and accountability.

Perhaps the most damning is the contradiction between the Government’s Draft Statement of Strategic Priorities for Online Safety, published last November, which emphasises effective regulation of small but risky services, and their and Ofcom’s implementation of categorisation thresholds that explicitly exclude these services from the highest level of scrutiny. Ofcom’s advice expressly disregarded—“discounted” is the phrase it used—the flexibility brought into the Act via the Morgan amendment, and advised that regulations should be laid that brought only large platforms into category 1. Its overcautious interpretation of the Act creates a situation where Ofcom recognises the risks but fails to recommend for itself the full range of tools necessary to address them effectively.

This is particularly important in respect of small, high-risk sites, such as suicide and self-harm sites, or sites which propagate racist or misogynistic abuse, where the extent of harm to users is significant. The Minister, I hope, will have seen the recent letter to the Prime Minister from a number of suicide, mental health and anti-hate charities on the issue of categorisation of these sites. This means that platforms such as 4chan, 8chan and Telegram, despite their documented role in spreading harmful content and co-ordinating malicious activities, escape the full force of regulatory oversight simply due to their size. This creates an absurd situation where platforms known to pose significant risks to public safety receive less scrutiny than large platforms with more robust safety measures already in place.

The Government’s insistence that platforms should be “safe by design”, while simultaneously exempting high-risk platforms from category 1 requirements based solely on size metrics, represents a fundamental contradiction and undermines what we were all convinced—and still are convinced—the Act was intended to achieve. Dame Melanie Dawes’s letter, in the aftermath of Southport, surely gives evidence enough of the dangers of some of the high-risk, smaller platforms.

Moreover, the Government’s approach fails to account for the dynamic nature of online risks. Harmful content and activities naturally migrate to platforms with lighter regulatory requirements. By creating this two-tier system, they have, in effect, signposted escape routes for bad actors seeking to evade meaningful oversight. This short-sighted approach could lead to the proliferation of smaller, high-risk platforms designed specifically to exploit these regulatory gaps. As the Minister mentioned, Ofcom has established a supervision task force for small but risky services, but that is no substitute for imposing the full force of category 1 duties on these platforms.

The situation is compounded by the fact that, while omitting these small but risky sites, category 1 seems to be sweeping up sites that are universally accepted as low-risk despite the number of users. Many sites with over 7 million users a month—including Wikipedia, a vital source of open knowledge and information in the UK—might be treated as a category 1 service, regardless of actual safety considerations. Again, we raised concerns during the passage of the Bill and received ministerial assurances. Wikipedia is particularly concerned about a potential obligation on it, if classified in category 1, to build a system that allows verified users to modify Wikipedia without any of the customary peer review.

Under Section 15(10), all verified users must be given an option to

“prevent non-verified users from interacting with content which that user generates, uploads or shares on the service”.

Wikipedia says that doing so would leave it open to widespread manipulation by malicious actors, since it depends on constant peer review by thousands of individuals around the world, some of whom would face harassment, imprisonment or physical harm if forced to disclose their identity purely to continue doing what they have done, so successfully, for the past 24 years.

This makes it doubly important for the Government and Ofcom to examine, and make use of, powers to more appropriately tailor the scope and reach of the Act and the categorisations, to ensure that the UK does not put low-risk, low-resource, socially beneficial platforms in untenable positions.

There are key questions that Wikipedia believes the Government should answer. First, is a platform caught by the functionality criteria so long as it has any form of content recommender system anywhere on UK-accessible parts of the service, no matter how minor, infrequently used and ancillary that feature is?

Secondly, the scope of

“functionality for users to forward or share regulated user-generated content on the service with other users of that service”

is unclear, although it appears very broad. The draft regulations provide no guidance. What do the Government mean by this?

Thirdly, will Ofcom be able to reliably determine how many users a platform has? The Act does not define “user”, and the draft regulations do not clarify how the concept is to be understood, notably when it comes to counting non-human entities incorporated in the UK, as the Act seems to say would be necessary.

The Minister said in her letter of 7 February that the Government are open to keeping the categorisation thresholds under review, including the main consideration for category 1, to ensure that the regime is as effective as possible—and she repeated that today. But, at the same time, the Government seem to be denying that there is a legally robust or justifiable way of doing so under Schedule 11. How can both those propositions be true?

Can the Minister set out why the regulations, as drafted, do not follow the will of Parliament—accepted by the previous Government and written into the Act—that thresholds for categorisation can be based on risk or size? Ofcom’s advice to the Secretary of State contained just one paragraph explaining why it had ignored the will of Parliament—or, as the regulator called it, the

“recommendation that allowed for the categorisation of services by reference exclusively to functionalities and characteristics”.

Did the Secretary of State ask to see the legal advice on which this judgment was based? Did DSIT lawyers provide their own advice on whether Ofcom’s position was correct, especially in the light of the Southport riots?

How do the Government intend to assess whether Ofcom’s regulatory approach to small but high-harm sites is proving effective? Have any details been provided on Ofcom’s schedule of research about such sites? Do the Government expect Ofcom to take enforcement action against small but high-harm sites, and have they made an assessment of the likely timescales for enforcement action?

What account did the Government and Ofcom take of the interaction and interrelations between small and large platforms, including the use of social priming through online “superhighways”, as evidenced in the Antisemitism Policy Trust’s latest report, which showed that cross-platform links are being weaponised to lead users from mainstream platforms to racist, violent and anti-Semitic content within just one or two clicks?

The solution lies in more than mere technical adjustments to categorisation thresholds; it demands a fundamental rethinking of how we assess and regulate online risk. A truly effective regulatory framework must consider both the size and the risk profile of platforms, ensuring that those capable of causing significant harm face appropriate scrutiny regardless of their user numbers and are prevented from causing that harm. Anything less—as many of us across the House believe, including on these Benches—would bring into question whether the Government’s commitment to online safety is genuine. The Government should act decisively to close these regulatory gaps before more harm occurs in our increasingly complex online landscape. I beg to move.

Lords Debate Regulators: Who Watches the Watchdogs?

Recently the Lords held a debate on the report of the Industry and Regulators Select Committee (on which I sit) entitled "Who Watches the Watchdogs", about the scrutiny given to the performance, independence and competence of our regulators.

This is what I said. It was an opportunity, as ever, to emphasise that regulation is not the enemy of innovation, or indeed growth, but can in fact, by providing certainty of standards, be the platform for it.

The Grenfell report and today’s Statement have been an extremely sobering reminder of the importance of effective regulation and the effective oversight of regulators. The principal job of regulation is to ensure societal safety and benefit—in essence, mitigating risk. In that context, the performance of the UK regulators, as well as the nature of regulation, is crucial.

In the early part of this year, the spotlight was on regulation and the effectiveness of our regulators. Our report was followed by a major contribution to the debate from the Institute for Government. We then had the Government’s own White Paper, Smarter Regulation, which seemed designed principally to take the growth duty established in 2015 even further with a more permissive approach to risk and a “service mindset”, and risked creating less clarity with yet another set of regulatory principles going beyond those in the Better Regulation Framework and the Regulators’ Code.

Our report was, however, described as excellent by the Minister for Investment and Regulatory Reform in the Department for Business and Trade under the previous Government, the noble Lord, Lord Johnson of Lainston, whom I am pleased to see taking part in the debate today. I hope that the new Government will agree with that assessment and take our recommendations further forward.

Both we and the Institute for Government identified a worrying lack of scrutiny of our regulators—indeed, a worrying lack of even identifying who our regulators are. The NAO puts the number of regulators at around 90 and the Institute for Government at 116, but some believe that there are as many as 200 that we need to take account of. So it is welcome that the previous Government’s response said that a register of regulators, detailing all UK regulators, their roles, duties and sponsor departments, was in the offing. Is this ready to be launched?

The crux of our report was to address performance, strategic independence and oversight of UK regulators. In exploring existing oversight, accountability measures and the effectiveness of parliamentary oversight, it was clear that we needed to improve self-reporting by regulators. However, a growth duty performance framework, as proposed in the White Paper, does not fit the bill.

Regulators should also be subject to regular performance evaluations, as we recommended; these reviews should be made public to ensure transparency and accountability. To ensure that these are effective, we recommended, as the noble Lord, Lord Hollick mentioned, establishing a new office for regulatory performance—an independent statutory body analogous to the National Audit Office—to undertake regular performance reviews of regulators and to report to Parliament. It was good to see that, similar to our proposal, the Institute for Government called for a regulatory oversight support unit in its subsequent report, Parliament and Regulators.

As regards independence, we had concerns about the potential politicisation of regulatory appointments. Appointment processes for regulators should be transparent and merit-based, with greater parliamentary scrutiny to avoid politicisation. Although strategic guidance from the Government is necessary, it should not compromise the operational independence of regulators.

What is the new Government’s approach to this? Labour’s general election manifesto emphasised fostering innovation and improving regulation to support economic growth, with a key proposal to establish a regulatory innovation office in order to streamline regulatory processes for new technologies and set targets for tech regulators. I hope that that does not take us down the same trajectory as the previous Government. Regulation is not the enemy of innovation, or indeed growth, but can in fact, by providing certainty of standards, be the platform for it.

At the time of our report, the IfG rightly said:

“It would be a mistake for the committee to consider its work complete … new members can build on its agenda in their future work, including by fleshing out its proposals for how ‘Ofreg’ would work in practice”.

We should take that to heart. There is still a great deal of work to do to make sure that our regulators are clearly independent of government, are able to work effectively, and are properly resourced and scrutinised. I hope that the new Government will engage closely with the committee in their work.

Lords Committee Highly Critical of Office for Students

Shortly before Parliament dissolved for the General Election, the House of Lords debated the Report of the Industry and Regulators Committee, Must do better: the Office for Students and the looming crisis facing higher education.

https://publications.parliament.uk/pa/ld5803/ldselect/ldindreg/246/24602.htm

This is what I said:

I declare an interest as chair of the council of Queen Mary University of London. I thank the noble Baroness, Lady Taylor, for her comprehensive and very fair introduction to our report. I thank her too for her excellent marshalling of our committee, with the noble Lord, Lord Hollick, and I add my thanks to our clerks and our special adviser during the inquiry.

I will speak in general terms rather than specifically about my own university. In higher education, there have been challenges aplenty to keep vice-chancellors and governing bodies awake at night: coming through the pandemic, industrial relations, cost of living rises for our students, pensions and research funding, to name but a few. But above all there are the ever-eroding unit of resource for domestic students, which was highlighted extremely effectively by the noble Lord, Lord Johnson of Marylebone, on the “Today” programme last week, and the Government’s continual policy interventions, including, above all, their seeming determination to reduce overseas student numbers.

In the face of this, I have to keep reminding myself that in 2021-22 Queen Mary University delivered a total economic benefit to the UK economy of £4.4 billion. For every pound we spent in 2021-22, we generated £7 of economic benefit. Universities are some of our great national assets. They not only are intellectual powerhouses for learning, education and social mobility, making a huge contribution to their local communities, but are inextricably linked to our national prospects for innovation and economic growth.

The committee’s report was very well received outside this House. Commentating in Wonkhe, the higher education blog—I do not know whether there are many readers of it around; I suspect there are—on the government and OfS responses to our report, its deputy editor noted:

“If you were expecting a seasonal mea culpa from either the regulator or the government … on any of these, it is safe to say that you will be disappointed”.

For him, the four standout aspects of our report were:

“the revelations about the place of students in the Office for Students … the criticism of the perceived closeness of the independent regulator to the government of the day … the school playground level approach to the Designated Quality Body question … and the less splashy but deeply concerning suggestion that OfS didn’t really understand the financial problems the sector was facing”.

As regards the DQB question, which the committee explored in some depth, the current approach being taken by the OfS is extremely opaque. We clearly need a regulatory approach to quality to align with international standards. It is clear that the quickest way to get the English system realigned with international good practice would be to reinstate the QAA—an internationally recognised agency. Most of us cannot understand what seems to be the implacable hostility of the OfS to the QAA.

It is notable that the OfS, perhaps stung by the committee’s report, has now belatedly woken up to the fragility of the sector’s financial model and the fact that the future of the overseas student intake is central to financial underpinning. In its 2023 report on the financial sustainability of HE providers, the OfS confirmed that the

“overreliance on international student recruitment is a material risk for many types of providers where a sudden decline or interruption to international fees could trigger sustainability concerns”

and

“result in some providers having to make significant changes to their operating model or face a material risk of closure”.

Advice that they need to change their funding model and diversify their revenue streams is not particularly helpful, given the options available.

The Migration Advisory Committee’s Rapid Review of the Graduate Route, published last week—which recommends retaining the graduate visa on its current terms and reports that the graduate route is achieving the objectives set for it by the Government, finding

“no evidence of any significant abuse”—

is therefore of crucial importance. There is absolutely no doubt about the importance of the work study visa to the sector and the broader UK economy. In answer to the question from the noble Lord, Lord Parekh, we want it, and I hope that the OfS will play its part in trying to persuade the Government to retain it.

The Wonkhe blog also asks the fundamental question about the Government’s response regarding the regulatory burden on higher education. I hope the Minister can tell us: do the Government think it necessary and acceptable to keep ratcheting up regulation on universities? We are going in the wrong direction. Additional resource is required to monitor and provide returns in a whole variety of areas, such as the new freedom of speech requirements.

With the extraordinary contribution that universities make to society, communities and the economy as a whole, will university regulation benefit from the proposals set out in Smarter Regulation: Delivering a Regulatory Environment for Innovation, Investment and Growth, the Government’s recent White Paper? We will discuss this in a future debate on the response to our subsequent report, Who Watches the Watchdogs? For instance, the White Paper proposes the adoption of

“a culture of world-class service”

in how regulators undertake their day-to-day activities, and the adoption by all government departments of the

“10 principles of smarter regulation”.

It says:

“All government departmental annual reports must also set out the total costs and benefits of each significant regulation introduced that year”,

and says that the Government will

“strengthen the role of the Regulatory Policy Committee and the Better Regulation Framework, improve the assessment and scrutiny of the costs of regulation, and encourage non-regulatory alternatives”.

It says:

“The government will launch a Regulators Council to improve strategic dialogue between regulators and government, alongside monitoring the effectiveness of policy and strategic guidance issued”.

Finally, it says that

“it is up to the government to better assess its regulatory agenda, to try to understand the cost of its regulation on business and society”.

What is not to like, in the context of higher education regulation? Will all this be applied to the work of the OfS?

That all said, I welcome some of the way in which the OfS, if not the Government, has responded to our report. There is an air approaching contrition, in particular regarding engagement with both students and higher education providers. I welcome the OfS reviewing its approach to student engagement, empowering the student panel to raise issues that are important to students and increasing engagement with universities and colleges to improve sector relations.

As regards the Government, a dialling down of their rhetoric continually undermining higher education, a pledge to ration ministerial directions given to the OfS, and putting university finances on a more sustainable, long-term footing would be welcome.

It is clear that continued scrutiny and evaluation—I very much liked what the noble Baroness, Lady Taylor, had to say about post-scrutiny reporting—will be essential to ensure that both government and OfS actions after their responses effectively address the underlying issues raised in our report. Sad to say, I do not think that the sector is holding its breath in the meantime.

We Need a New Offence of Digital ID Theft

As part of the debates on the Data Protection Bill, I recently advocated for a new Digital ID theft offence. This is what I said.

It strikes me as rather extraordinary that we do not have an identity theft offence. This is the Metropolitan Police guidance for the public:

“Your identity is one of your most valuable assets. If your identity is stolen, you can lose money and may find it difficult to get loans, credit cards or a mortgage. Your name, address and date of birth provide enough information to create another ‘you’”.

It could not be clearer. It goes on:

“An identity thief can use a number of methods to find out your personal information and will then use it to open bank accounts, take out credit cards and apply for state benefits in your name”.

It then talks about the signs that you should look out for, saying:

“There are a number of signs to look out for that may mean you are or may become a victim of identity theft … If you think you are a victim of identity theft or fraud, act quickly to ensure you are not liable for any financial losses … Contact CIFAS (the UK’s Fraud Prevention Service) to apply for protective registration”.

However, there is no criminal offence.

Interestingly enough, I mentioned this to the noble Baroness, Lady Morgan. Back in October 2022, her committee—the Fraud Act 2006 and Digital Fraud Committee—produced a really good report, Fighting Fraud: Breaking the Chain, which said:

“Identity theft is often a predicate action to the criminal offence of fraud, as well as other offences including organised crime and terrorism, but it is not a criminal offence. Cifas data shows that cases of identity fraud increased by 22% in 2021, accounting for 63% of all cases recorded to Cifas’ National Fraud Database”.

It goes on to talk about identity theft to some good effect but states:

“In February 2022, the Government confirmed that there were no plans to introduce a new criminal offence of identity theft as ‘existing legislation is in place to protect people’s personal data and prosecute those that commit crimes enabled by identity theft’”.

I do not think the committee agreed with that at all. It said:

“The Government should consult on the introduction of legislation to create a specific criminal offence of identity theft. Alternatively, the Sentencing Council should consider including identity theft as a serious aggravating factor in cases of fraud”.

The Government are certainly at odds with the Select Committee chaired by the noble Baroness, Lady Morgan. I am indebted to a creative performer called Bennett Arron, who raised this with me some years ago. He related with some pain how he took months to get back his digital identity. He said: “I eventually, on my own, tracked down the thief and gave his name and address to the police. Nothing was done. One of the reasons the police did nothing was because they didn’t know how to charge him with what he had done to me”. That is not a good state of affairs. Then we heard from Paul Davis, the head of fraud prevention at TSB. The headline of the piece in the Sunday Times was: “I’m head of fraud at a bank and my identity was still stolen”. He is top dog in this area, and he has been the subject of identity theft.

This seems an extraordinary situation, whereby the Government are sitting on their hands. There is a clear issue with identity theft, yet they are refusing—they have gone into print, in response to the committee chaired by the noble Baroness, Lady Morgan—and saying, “No, no, we don’t need anything like that; everything is absolutely fine”. I hope that the Minister can give a better answer this time around.

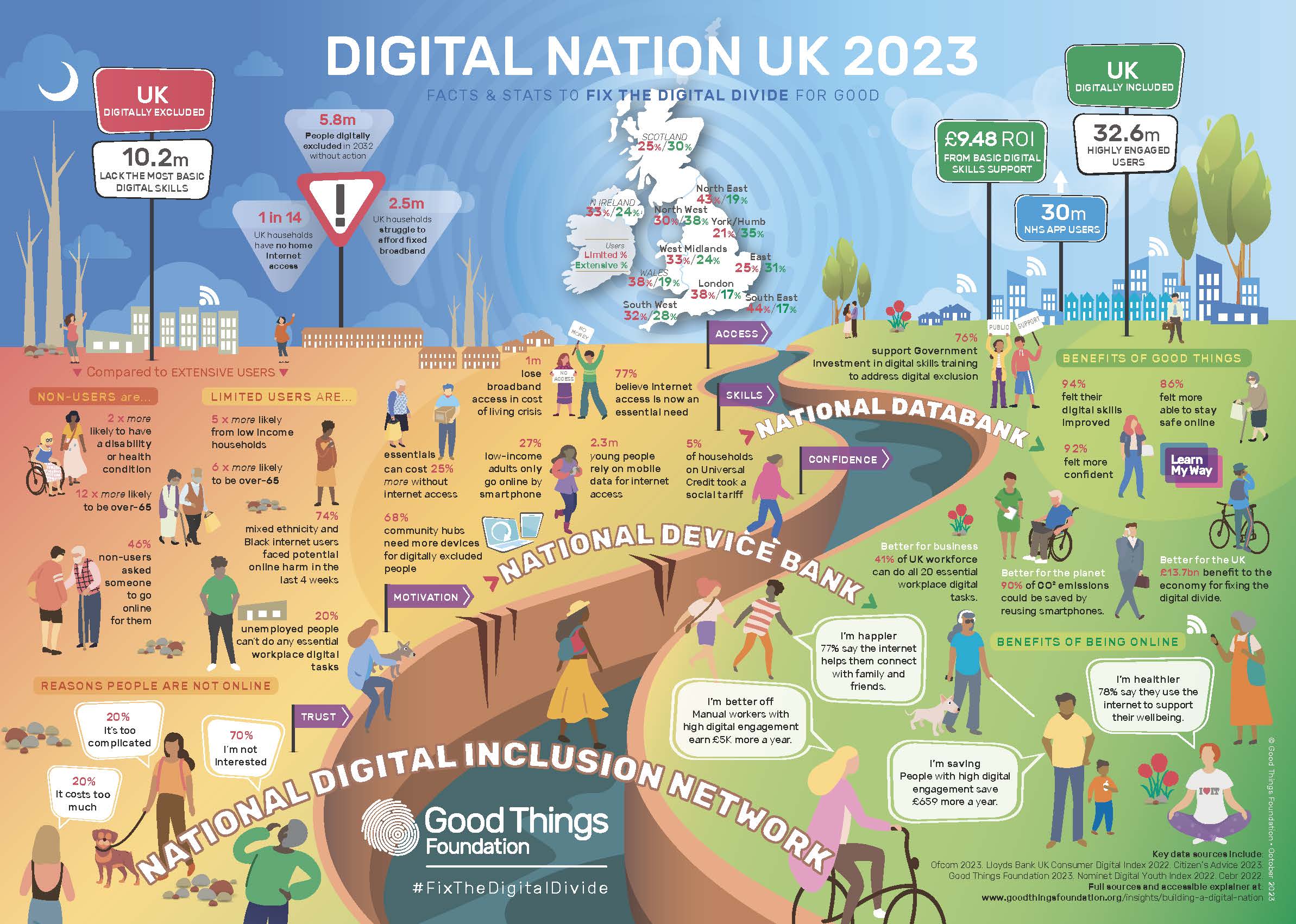

Lords call for action on Digital Exclusion

The House of Lords recently debated the Report of the Communications and Digital Committee on Digital exclusion. This is an edited version of what I said when winding up the debate.

Trying to catch up with digital developments is a never-ending process, and the theme of many noble Lords today has been that the sheer pace of change means we have to be a great deal more active in what we are doing in terms of digital inclusion than we are being currently.

Access to data and digital devices affects every aspect of our lives, including our ability to learn and work; to connect with online public services; to access necessary services, from banking to healthcare; and to socialise and connect with the people we know and love. For those with digital access, particularly in terms of services, this has been hugely positive, as access to the full benefits of state and society has never been more flexible or convenient if you have the right skills and the right connection.

However, a great number of our citizens cannot take advantage of these digital benefits. They lack access to devices and broadband, and mobile connectivity is a major source of data poverty and digital exclusion. This proved to be a major issue during the Covid pandemic. Of course the digital divide has not gone away subsequently—and it does not look as though it is going to any time soon.

There are new risks coming down the track, too, in the form of BT’s Digital Voice rollout. The Select Committee’s report highlighted the issues around digital exclusion. For example, it said that 1.7 million households had no broadband or mobile internet access in 2021; that 2.4 million adults were unable to complete a single basic task to get online; and that 5 million workers were likely to be acutely underskilled in basic skills by 2030. The Local Government Association’s report, The Role of Councils in Tackling Digital Exclusion, showed a very strong relationship between having fixed broadband and higher earnings and educational achievement, such as being able to work from home or for schoolwork.

To conflate two phrases that have been used today, this may be a Cinderella issue but “It’s the economy, stupid”. To borrow another phrase used by the noble Baroness, Lady Lane-Fox, we need to double down on what we are already doing. As the committee emphasised, we need an immediate improvement in government strategy and co-ordination. The Select Committee highlighted that the current digital inclusion strategy dates from 2014. They called for a new strategy, despite the Government’s reluctance. We need a new framework with national-level guidance, resources and tools that support local digital inclusion initiatives.

The current strategy seems to be bedevilled by the fact that responsibility spans several government departments. It is not clear who—if anyone—at ministerial and senior officer level has responsibility for co-ordinating the Government’s approach. Lord Foster mentioned accountability, and Lady Harding talked about clarity around leadership. Whatever it is, we need it.

Of course, in its report, the committee stressed the need to work with local authorities. A number of noble Lords have talked today about regional action, local delivery, street-level initiatives: whatever it is, again, it needs to be at that level. As part of a properly resourced national strategy, city and county councils and community organisations need to have a key role.

The Government too should play a key role, in building inclusive digital local economies. However, it is clear that there is very little strategic guidance to local councils from central government around tackling digital exclusion. As the committee also stresses, there is a very important role for competition in broadband rollout, especially in terms of giving assurance that investors in alternative providers to the incumbents get the reassurance that their investment is going on to a level playing field. I very much hope that the Minister will affirm the Government’s commitment to those alternative providers in terms of the delivery of the infrastructure in the communications industry.

Is it not high time that we upgraded the universal service obligation? The committee devoted some attention to this and many of us have argued for this ever since it was put into statutory form. It is a wholly inadequate floor. We all welcome the introduction of social tariffs for broadband, but the question of take-up needs addressing. The take-up is desperately low at 5%. We need some form of social tariff and data voucher auto-enrolment. The DWP should work with internet service providers to create an auto-enrolment scheme that includes one or both products as part of its universal credit package. Also, of course, we should lift VAT, as the committee recommended, and Ofcom should be empowered to regulate how and where companies advertise their social tariffs.

We also need to make sure that consumers are not driven into digital exclusion by mid-contract price rises. I would very much appreciate hearing from the Minister on where we are with government and Ofcom action on this.

The committee rightly places emphasis on digital skills, which many noble Lords have talked about. These are especially important in the age of AI. We need to take action on digital literacy. The UK has a vast digital literacy skills and knowledge gap. I will not quote Full Fact’s research, but all of us are aware of the digital literacy issues. Broader digital literacy is crucial if we are to ensure that we are in the driving seat, in particular where AI is concerned. There is much good that technology can do, but we must ensure that we know who has power over our children and what values are in play when that power is exercised. This is vital for the future of our children, the proper functioning of our society and the maintenance of public trust. Since media literacy is so closely linked to digital literacy, it would be useful to hear from the Minister where Ofcom is in terms of its new duties under the Online Safety Act.

We need to go further in terms of entitlement to a broader digital citizenship. Here I commend an earlier report of the committee, Free For All? Freedom of Expression in the Digital Age. It recommended that digital citizenship should be a central part of the Government’s media literacy strategy, with proper funding. Digital education in schools should be embedded, covering both digital literacy and conduct online, aimed at promoting stability and inclusion and how that can be practised online. This should feature across subjects such as computing, PSHE and citizenship education, as recommended by the Royal Society for Public Health in its #StatusOfMind report as long ago as 2017.

Of course, we should always make sure that the Government provide an analogue alternative. We are talking about digital exclusion but, for those who are excluded and have the “fear factor”, we need to make sure that we do not assume that all services can be delivered digitally.

Finally, we cannot expect the Government to do it all. We need to draw on and augment our community resources; I am a particular fan of the work of the Good Things Foundation (see their infographic accompanying this), FutureDotNow, CILIP (the library and information association) and the Trussell Trust, and we have heard mention of the churches, which are really important elements of our local delivery. They need our support, and the Government’s, to carry on the brilliant work that they do.

We Can't Let this Disastrous Retained EU Law Bill go through in its current form

In the Lords we recently saw the arrival of the Retained EU Law (Revocation and Reform) Bill. With its sunset clause threatening to phase out up to 4,000 pieces of vital IP, environmental, consumer protection and product safety legislation on 31st December 2023, we need to drastically change or block it. This is what I said on second reading:

I hosted a meeting with Zsuzsanna Szelényi, the brave Hungarian former MP, a member of Fidesz and the author of Tainted Democracy: Viktor Orbán and the Subversion of Hungary. I reflected that this Bill, especially in the light of the reports from the DPRRC and the SLSC, is a government land grab of powers over Parliament, fully worthy of Viktor Orbán himself and his cronies. This is no less than an attempt to achieve a tawdry version of Singapore-on-Thames in the UK without proper democratic scrutiny, to the vast detriment of consumers, workers and creatives. It is no surprise that the Regulatory Policy Committee has stated that the Bill’s impact assessment is not fit for purpose.

It is not only important regulations that are being potentially swept away, but principles of interpretation and case law, built up over nearly 50 years of membership of the EU. This Government are knocking down the pillars of certainty of application of our laws. Lord Fox rightly quoted the Bar Council in this respect. Clause 5 would rip out the fundamental right to the protection of personal data from the UK GDPR and the Data Protection Act 2018. This is a direct threat to the UK’s data adequacy, with all the consequences that that entails. Is that really the Government’s intention?

As regards consumers, Which? has demonstrated the threat to basic food hygiene requirements for all types of food businesses: controls over meat safety, maximum pesticide levels, food additive regulations, controls over allergens in foods and requirements for baby foods. Product safety rights at risk include those affecting child safety and regulations surrounding transport safety. Civil aviation services could be sunsetted, along with airlines’ liability requirements in the event of airline accidents. Consumer rights on cancellation and information, protection against aggressive selling practices and redress for consumer law breaches across many sectors could all be impacted. Are any of these rights dispensable—mere parking tickets?

The TUC and many others have pointed out the employment rights that could be lost, and health and safety requirements too. Without so much as a by-your-leave, the Government could damage the employment conditions of every single employee in this country.

For creative workers in particular, the outlook as a result of this Bill is bleak. The impact of any change on the protection of part-time and fixed-term workers is particularly important for freelance workers in the creative industries. Fixed-term workers currently have the right to be treated no less favourably than a comparable permanent employee unless the employer can justify the different treatment. Are these rights dispensable? Are they mere parking tickets?

Then there is potentially the massive change to intellectual property rights, including CJEU case law on which rights holders rely. If these fall away, it creates huge uncertainty and incentive for litigation. The IP regulations and case law on the dashboard which could be sunsetted encompass a whole range, from databases, computer programs and performing rights to protections for medicines. At particular risk are artists’ resale rights, which give visual artists and their heirs a right to a royalty on secondary sales of the artist’s original works when sold on the art market. Visual artists are some of the lowest-earning creators, earning between £5,000 and £10,000 a year. Are these rights dispensable? Have the Government formed any view at all yet?

This Bill has created a fog of uncertainty over all these areas—a blank sheet of paper, per Lord Beith; a giant question mark, per Lord Heseltine—and the impact could be disastrous. I hope this House ensures it does not see the light of day in its current form.